29 Apr AI-Powered Newsrooms: The Top Tools and Case Studies to Get You Started

Let’s start with a quick read of the room on how news organisations around the world feel about introducing generative AI into the newsroom. A clear overall picture emerges from the Reuters Institute for the Study of Journalism’s “Changing Newsrooms” report, published at the end of 2023. This report is based on a survey of 135 senior industry leaders from 40 countries and includes ten in-depth interviews.

The survey sought opinions on how generative AI and workflow automation might impact newsroom jobs over the next decade. A significant 74% of respondents believe that “generative AI will help us do some things more efficiently, but the essence of what we do won’t change.” Meanwhile, 21% foresee that “generative AI will transform workflows and processes, fundamentally altering every role in the newsroom.” Only 2% think generative AI will leave news work unchanged, underscoring the widespread belief among newsroom leaders in AI’s potential industry impact.

Some key quotes from those surveyed:

“I hope it will make some tasks easier and more automatic, but the essence of our journalism will remain unchanged,” from the CEO of a Danish media company. “Generative AI will help us produce better articles, identify audience needs, and eliminate some redundancies. However, humans will still be essential for fact-checking, quality control, and ethical adherence,” shared by the CEO of a digital company in Indonesia.

A senior editor from France noted, “Gen AI is less likely to assist in core journalistic missions like gathering original information, often from the field and human sources, checking and verifying information, and presenting a unique perspective in an original manner. However, it can enhance efficiency in some tasks, scale up content distribution, and support journalists in certain aspects of their work.”

Further insights into how newsroom leaders perceive the applications of generative AI come to us in the “Journalism, Media, and Technology Trends and Predictions 2024” report by Nic Newman for the Reuters Institute. The report is based on an industry survey of a strategic sample of more than 300 digital leaders from over 50 countries and territories.

In the survey, news executives prioritise back-end automation tasks, such as transcription and copyediting (56%), followed by recommender systems (37%), content creation with human oversight (28%), and commercial applications (27%). Other notable applications include coding (25%), where some publishers report significant productivity gains, and newsgathering (22%), where AI can support investigative reporting, fact-checking, and verification.

Newman notes, “Back-end automation has gained increasing importance over time. Only 29% considered these AI applications very important when we posed this question in our survey two years ago. This heightened focus is partly due to the advent of large language models (LLMs) that have since emerged, offering numerous opportunities to accelerate and refine routine tasks in the newsroom.”

Importantly, amongst several prominent digital leaders surveyed, AI is not seen as a substitute for reporters or journalism itself. “The most compelling use case for AI in newsrooms is in the automation of routine tasks performed by editors, such as adding tags of SEO metadata,” says Ed Roussel, Head of Digital at The Times and The Sunday Times. “We do not believe that AI is a substitute for reporting stories, which will continue to be done by journalists.”

“In our survey, we observe that respondents perceive varying levels of risk associated with different AI applications. Content creation is identified as the area with the highest perceived risk (56%), followed by newsgathering (28%). In contrast, areas such as back-end automation (11%), distribution, and coding are seen as posing lower risks,” Newman remarks.

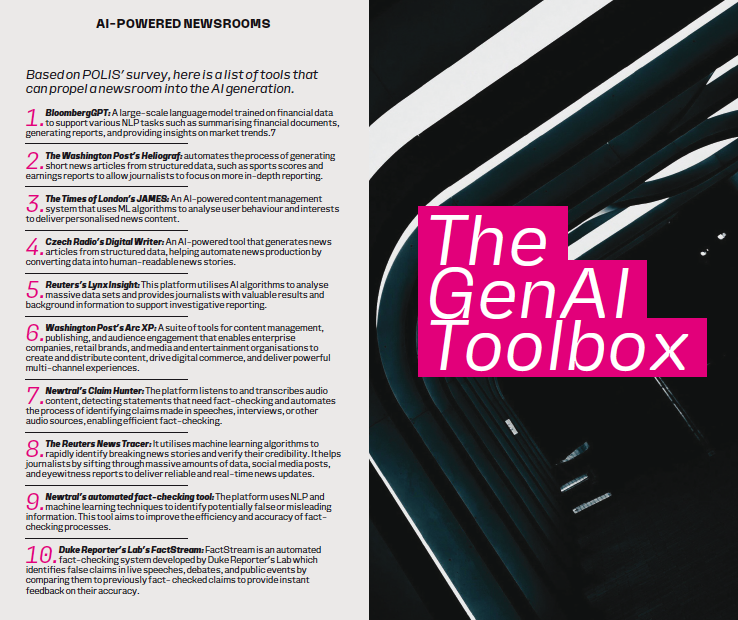

Finally, we’ll also refer heavily in this chapter to the work of POLIS, the journalism think tank at the London School of Economics and Political Science. Their report, “Generating Change: A global survey of what news organisations are doing with artificial intelligence,” gathers insights from over 120 editors, journalists, technologists, and media makers from 105 newsrooms across 46 countries. Among the key findings are that a vast majority of newsrooms (90%) are already incorporating AI in news production, with 80% using it in news distribution and 75% in news gathering. These applications range from automated transcription and translation, extracting text from images, and web scraping for news gathering, to translating articles, proofreading, writing headlines, or even composing entire articles for news production. Distribution efforts are enhanced by AI-driven search engine optimisation and content personalisation for specific audiences.

Despite the widespread adoption of AI tools, the report highlights a significant gap in strategic planning; only about one-third of surveyed newsrooms have an AI strategy in place or are in the process of developing one. This indicates a cautious approach towards integrating AI, reflecting concerns about its implications. Moreover, the intersection of AI applications across news gathering, production, and distribution underscores the shift towards more interconnected, “networked” or “hybrid” forms of journalism, marking a significant evolution in how news is created and shared in the digital age.

You’ve heard what newsroom leaders feel about bringing in AI, what its uses are so far and just how quickly it seems to have made a mark on the industry across several geographies and contexts.

Now the challenge is this: if you haven’t already started experimenting, how do you do so? And if you have, and want to learn about the best use cases from others, what can you pick up and imitate or be inspired by? That’s what we’ll be diving into headlong here, first listing some of the domains AI has been put to use in and enumerating the tools used in each. We have to note here, just to avoid confusion, that this list is a combination of generative AI and some ‘older’ automation or analytical tools, some of whose capabilities have been supercharged with generative AI.

NEWSGATHERING

AI applications can assist newsrooms in gathering material from various sources and helping the editorial team gauge an audience’s interests as part of a data-driven production cycle. The responses to the POLIS survey revealed that a large majority, almost three quarters of organisations, use AI tools in newsgathering, in two main areas:

1- Optical Character Recognition (OCR), Speech-to-Text, and Text Extraction: AI facilitates the automation of transcription, extraction of text from images, and data structuring. Tools like Colibri.ai, SpeechText.ai, Otter.ai, and Whisper are streamlining speech-to-text transcription and automated translation, enhancing content accessibility across languages.

2- Trend Detection and News Discovery: AI can analyse vast data sets to identify patterns and trends. Using tools such as Google Trends, web scraping, and data mining services like Dataminr and RapidMiner, journalists can pinpoint trending topics and uncover stories. CrowdTangle aids in spotting viral social media posts, while speech-to-text algorithms monitor public discourse. The POLIS survey also highlights how AI technologies are simplifying routine tasks like data classification and content organisation, with applications ranging from tag generation to automated chatbot responses.

Some initiatives involve collaboration with other organisations to develop specialised tools. One notable project involved partnering with the Organized Crime and Corruption Reporting Project (OCCRP) to adapt their engine for Arabic content, showcasing the versatile applications of AI in journalism.

NEWS PRODUCTION

Nearly 90% of respondents in the POLIS survey reported using AI for tasks such as fact-checking, proofreading, trend analysis, and generating summaries. Tools like Grammarly are commonplace for enhancing the quality of content through spell checking and grammar corrections. Some innovative applications mentioned include:

Summarisation: Publications like Aftonbladet in Sweden and Helsingin Sanomat in Finland are adding summary bullet points to articles, increasing engagement, particularly among younger readers.

Headline Testing: Experimentation with AI to craft search-optimised headlines is underway, with human editors providing final approval.

Copyediting and Transcription: The adoption of AI for these tasks is growing, though not without impacting jobs. Axel Springer’s CEO mentioned that roles susceptible to automation, such as proofreading, are evolving.

Translation: Le Monde, for example, uses AI to translate articles into English, significantly increasing output with human oversight for quality assurance.

Image Generation and Article Creation: Tools like Midjourney are used for creating illustrations, while the German tabloid Express.de has introduced a virtual journalist, Klara Indernach (KI), responsible for composing stories across various topics, with human editors overseeing the final content.

NEWS DISTRIBUTION

Approximately 80% of respondents in the POLIS survey have integrated AI technologies into their news distribution strategies. Though this figure is slightly lower than that for production, the variety of applications in distribution is notably broad. The primary goal behind leveraging AI for news distribution is to extend audience reach and enhance engagement. In fact, news distribution emerged as the sector most influenced by AI, with 20% of participants acknowledging its significant impact.

TARGETING SPECIFIC AUDIENCES

One trend underscored by Newman is the use of AI tools to adapt news content for specific audiences, enhancing relevance and comprehension. For example, Artifact, a social news reading app, showcases the potential of AI by summarising news in different styles, such as simplifying an article from The Guardian for a five-year-old, tailoring it to the preferences of Gen Z, or converting it into a series of emojis. The proliferation of bots, apps, and browser extensions with similar functionalities is anticipated to accelerate in 2024.

Text-to-speech technology represents another facet of AI in distribution, optimising content across different mediums by converting text to audio, for instance.

Social media distribution also benefits from AI-powered tools like Echobox and SocialFlow, which streamline the scheduling of content across social platforms.

Additionally, the use of chatbots was highlighted for offering more personalised user experiences and improving response times.

SEO AND VISIBILITY

A critical aspect of digital content, especially for newsrooms, is enhancing visibility in search results. AI-driven SEO tools play a crucial role in this context by aiding newsrooms in identifying highly searched keywords and understanding audience interests. Ubersuggest provides insights into popular search terms, while Google Discover and CrowdTangle track trending stories and overperforming social media posts, respectively. This intelligence enables the creation of news stories that resonate with public curiosity, leveraging frequently searched keywords to maximise story visibility and audience reach.

AI IN BROADCASTING

The demand for audio content has led newspapers to offer news summaries and reports in audio format, acknowledging the preferences of younger audiences. Aftenposten in Norway leads this trend, providing most articles in audio to accommodate children and visually impaired readers. This shift is supported by advancements in mobile technology and a rise in podcast listenership. Radio Expres in Slovakia and two stations in the west of England are experimenting with synthetic voices for night shift coverage and hourly bulletins, highlighting the cost-effectiveness and realism of these AI-generated voices.

TV CHANNEL GENERATION

Going one stage further is NewsGPT, an experimental 24-hour television service where all the stories and all the presenters are generated by AI, without any human intervention. A small disclaimer says content may contain inaccuracies or unexpected outputs. NewsGPT, which can be watched via YouTube, bills itself as delivering news ‘without human biases’.

NLP applications also assist with factual claim-checking: they identify claims and match them with previously fact-checked ones. Reverse-image search is also used in verification.

AI IN ACTION: CASE STUDIES FOR PUBLISHERS

There’s no easy way to categorise this but the experiments across publishers with Gen AI have been vast, covering almost all aspects of news production and other functions like advertising. Here we detail just some of the interesting examples that have emerged recently, hoping you find some inspiration from all of them.

AMEDIA’S AI SANDBOX EMPOWERING LOCAL JOURNALISM THROUGH AI

In late February, Amedia, Norway’s second largest media conglomerate, unveiled its pioneering project: an AI sandbox. This initiative provides a secure space for journalists within the Norwegian local media group to explore and utilise generative AI for various efficiency-enhancing tasks. The primary goal of this sandbox is to foster superior journalism by empowering reporters to use AI as an assistant. This approach allows journalists to dedicate more time to engaging with the community and covering vital, local stories, thereby enhancing the depth and breadth of local journalism.

Safeguarding Trust. The establishment of the AI sandbox by Amedia introduces a critical trust dimension to the use of AI in journalism. By creating this platform, Amedia positions itself as a protector of ethical AI utilisation for local journalism’s advancement. Markus Rask Jensen, the Director at Amedia, emphasises that trust is the cornerstone of journalism, and the responsible application of AI is essential for maintaining this trust. To guide local editors, who bear the ultimate responsibility for AI’s application in their newsrooms, Amedia has crafted a set of generative AI guidelines. These guidelines, embodied by the sandbox, offer journalists a secure environment to experiment with and harness AI without compromising ethical standards.

A Controlled Approach to Content Protection. “We aim to explore the editorial use of AI within a protected setting. The overarching concern here is content security; our revenue is primarily subscription-driven. We’ve been intentionally cautious about integrating our paywalled content into large language models (LLMs). By managing our AI system, we maintain control over both the input and output,” Jensen explains. Highlighting the feasibility of creating such a sandbox, he notes, “Establishing a basic sandbox is not an enormous technical challenge. The core development of our project required 1-2 people approximately a month to complete, not even on a full-time basis. It’s feasible for a newsroom to engage developers for about 10–15 hours to establish a secure generative AI environment akin to ours.”

SEMAFOR AND MICROSOFT INTEGRATING CHATGPT INTO NEWS REPORTING

As of February 2024, Microsoft has teamed up with the media outlet Semafor, co-founded by Ben Smith, former editor-in-chief of BuzzFeed, on a new venture. This project introduces “Signals,” a feed sponsored by Microsoft with an investment described as “substantial” by The Financial Times. The initiative aims to produce a series of posts daily, focusing on breaking news and analysis.

UTILISING AI FOR RESEARCH IN JOURNALISM

The essence of this collaboration is the use of ChatGPT as a research tool in the journalistic process. Semafor plans to employ AI to quickly gather reports on breaking events from various international news sources, overcoming language barriers with translation tools. This approach allows for the inclusion of diverse perspectives from around the world, with journalists adding their own context and summaries to create a more nuanced story. Noreen Gillespie, now associated with Microsoft and a former journalist with AP, emphasised the importance of adopting AI tools for journalism’s future, as reported by The Financial Times. This initiative by Semafor and Microsoft demonstrates an attempt to balance the capabilities of AI with the irreplaceable value of human judgment in news reporting, aiming to provide a more enriched and globally aware news product.

MEDIACORP – INTEGRATION OF AI IN WEATHER FORECASTING

Mediacorp has introduced “AI Weather,” a software capable of analysing data to generate weather forecast clips, marking a significant step in its effort to integrate AI into its operations. At the recent WAN-IFRA Digital Media Asia conference, Wang Yin, Assistant Lead of the AI Strategy and Solutions team at Mediacorp News, Singapore, highlighted the organisation’s vision. “We see AI as a tool to boost efficiency and effectiveness while also fostering new capabilities, positioning it as an assistant rather than a replacement,” Wang explained. Before this technological advancement, the production of 11 daily forecast clips, in various languages, required the effort of three production teams. The adoption of AI has simplified these operations considerably. AI bots now handle tasks such as data analysis, audio and video editing, and quality control. This evolution in workflow has freed Mediacorp journalists to focus more on creating innovative content.

SCHIBSTED’S STRATEGIC AI INTEGRATION

Schibsted, the Nordic region’s largest media conglomerate, is embracing AI to enhance its newsroom operations, including the creation of short-form article summaries, developing synthetic voice clones for text-to-speech functionality, and improving transcription processes. This initiative reflects the company’s commitment to leveraging AI for more efficient and engaging journalism.

Cultivating AI Expertise through Schibsted AI Academy. In 2019, Schibsted took a significant step forward by establishing the Schibsted AI Academy, attracting over 600 staff members to its training programs. The organisation also developed the FAST (Fairness, Accountability, Sustainability, and Transparency) framework to navigate the unique challenges posed by AI, ensuring responsible development and implementation of AI technologies across its operations.

Engagement and Inclusion in AI Development. Schibsted’s approach extends beyond the newsroom to include its audiences in the dialogue about AI’s role in the news ecosystem. Agnes Stenbom, head of IN/LAB at Schibsted Sweden, highlights the importance of incorporating diverse perspectives into the development process, emphasising the value of interdisciplinary collaboration to foster innovation and cultural ownership of AI within the company. Schibsted engages a broad range of stakeholders through hackathons and internal events to spark enthusiasm and broaden AI knowledge, serving as a foundation for successful product ideation.

Personalisation and Audience Adaptation. Anders Grimstad, head of foresight and emerging interfaces at Schibsted, advocates for AI-driven personalisation to tailor content more closely to individual audience needs. Speaking at the INMA World Congress in May 2023, Grimstad envisioned a future where content adapts to specific audience segments, such as immigrants or elderly readers, enhancing accessibility and relevance.

ENGAGING GEN Z WITH INNOVATIVE FORMATS

At the intersection of journalism, technology, and democracy, Schibsted’s IN/LAB, in partnership with the Tinius Trust, explores new ways to connect with Gen Z audiences. One such initiative, discussed by Stenbom at the World News Media Congress 2023 in Taipei, is the News Changemaker program. This innovative project transforms written news stories into AI-generated music, particularly rap songs, demonstrating an inventive method to engage young consumers. Tested on Aftonbladet, Sweden’s leading news platform, this approach received positive feedback, showcasing the potential of creative AI applications to attract and retain younger audiences.

NEWSQUEST – AUTOMATED DRAFTING FOR EFFICIENCY

Newsquest has built an in-house tool that drafts stories based on trusted information. The dashboard used by Newsquest’s seven AI-assisted journalists features a “Notes” input field on the left, into which reporters feed the information they have gathered. Typical inputs might include a press release or quotes from a source. The generative AI then creates a story, displayed in the right-hand panel, for the reporter to review. According to head of editorial AI Jody Doherty-Cove, this relieves reporters of mundane but very important tasks, freeing them up to do the human-touch journalism that really resonates with communities.

At the Artificial Intelligence in Journalism event held by NCTJ, he described the set-up as a “human in the loop system” – meaning, one that ensures a journalist checks AI-generated copy so reporters “have first and last word when it comes to anything that’s produced”.

Doherty-Cove calls it “a hyper-efficient copywriter” that drafts the story according to set instructions. The draft then goes back to the reporter, who reviews it before publishing. The journalists ensure the copy is accurate, exercise editorial judgement, protect data and copyright, and watch for any bias.

SAN FRANCISCO CHRONICLE’S CHOWBOT BLENDING AI WITH CULINARY JOURNALISM

The San Francisco Chronicle is experimenting with an AI-powered chatbot to help readers find recommended restaurants and specific dishes throughout the Bay Area. The Chowbot is the news org’s “first real foray into audience-facing AI,” editor of emerging product Sarah Feldberg said to Nieman Lab. The Chronicle assures readers the Chowbot is trustworthy because of the source material: the paper’s own “constantly updated” guides and reviews. While the recommendations are AI-generated, every restaurant that the bot suggests has been vetted and visited by a real human on the Chronicle staff. Feldberg said early reader queries have included gluten-free options, vegetarian dim sum, fried chicken, and restaurants for a celebration.

The paper’s food and wine editor, Janelle Bitker, views the chatbot as “a reader-friendly tool” that “accentuates” the work of the food and wine team rather than replacing it. However, Chowbot can also get it wrong. “Despite Chowbot’s answers being grounded in the work of the Chronicle’s Food + Wine team, Chowbot is not immune to the hallucinations that plague all software that is powered by large language models, including OpenAI’s ChatGPT and Google’s Gemini,” Bitker writes. “Those two companies have thousands of employees working on this technology. If they encounter these issues, we likely will, too.”

REACH – AI ASSISTANT FOR FAST-PACED NEWSROOMS

Reach, the publisher of Mirror, Express, and Liverpool Echo, has introduced an AI tool called Gutenbot to assist journalists in quickly rewriting stories from other sites within its network. Gutenbot helps in generating new versions of articles by making changes like using synonyms or rephrasing passages while preserving the original meaning. It has also been used to rewrite police press releases and agency copy. Despite concerns about potential negative effects on audience trust due to lack of disclosure, Reach claims that Gutenbot has increased page views and article volume.

How it works. Guten users are presented with an input panel, into which they paste the text they want to rewrite, and an output panel showing the new version. Any changes made by Guten are highlighted, and any errors in those changes are to be reported back to the company by the user. Error categories to be reported back to Reach include missed entities or quotes – things that were mentioned or said in the original story but omitted from Guten’s version – and hallucinated, i.e. fabricated, entities or quotes. Reach asks all journalists publishing a story written with Guten to make any necessary amendments and then add the URL to a spreadsheet, so the company can keep a database of all its AI-involved articles. Guten also registers the differences between the story it suggested and the version that got published in order to inform its future edits.
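The kind of check described above can be illustrated with a minimal sketch. It is not Reach’s implementation, which is not described in detail here; the function names `extract_quotes` and `compare_rewrite` are hypothetical, and the sketch assumes quotes are marked with straight or curly double quotation marks. It lists the rewritten spans that would be highlighted for review, plus quotes missing from, or newly appearing in, the rewrite.

```python
import difflib
import re

# Assumption: direct quotes sit between straight or curly double quotation marks.
QUOTE_RE = re.compile(r'[“"]([^“”"]+)[”"]')


def extract_quotes(text):
    """Return the set of quoted passages found in the text."""
    return set(QUOTE_RE.findall(text))


def compare_rewrite(original, rewrite):
    """Hypothetical helper: summarise how a rewrite differs from its source."""
    original_quotes = extract_quotes(original)
    rewrite_quotes = extract_quotes(rewrite)

    # Word-level diff: collect the spans of the rewrite that were changed or
    # added, i.e. the text an editor would see highlighted.
    original_words = original.split()
    rewrite_words = rewrite.split()
    matcher = difflib.SequenceMatcher(None, original_words, rewrite_words)
    changed_spans = [
        " ".join(rewrite_words[j1:j2])
        for tag, _i1, _i2, j1, j2 in matcher.get_opcodes()
        if tag in ("replace", "insert")
    ]

    return {
        # Quotes in the source that did not survive the rewrite.
        "missed_quotes": sorted(original_quotes - rewrite_quotes),
        # Quotes in the rewrite that have no match in the source.
        "hallucinated_quotes": sorted(rewrite_quotes - original_quotes),
        "changed_spans": changed_spans,
    }


if __name__ == "__main__":
    source = 'Police said "the road will stay closed" after the crash.'
    draft = 'Officers said "the road will reopen soon" following the collision.'
    print(compare_rewrite(source, draft))
```

A check like this only flags candidates for a journalist to look at. It compares quotes as exact strings, so a lightly reworded quote would be reported as both missed and hallucinated, which is arguably the behaviour an editor wants for quoted speech.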

Aiding, not Replacing, Journalistic Work. Reach maintains that Gutenbot is meant to assist journalists rather than replace them. As the company told Press Gazette, “editorial review will always be required to verify the accuracy of that text… It’s best to think of Gutenbot as a junior reporter whose draft copy tends to require some checks and tweaks by an experienced editor.” Editorial must review AI, “especially when it comes to quotes, given the possible legal risks and ramifications involved.”

KSTA MEDIA (Germany) – INTEGRATING AI ACROSS PUBLISHING

Thomas Schultz-Homberg, CEO of KSTA Media, which publishes the likes of the daily newspaper Kölner Stadt-Anzeiger, has said that the publisher is now using AI for topic pages, taxonomy, contextual advertising, and personalisation. KSTA’s news websites are now rolling out a process by which AI curates 80% of the site, to make sure people stay as long as possible because they see things that interest them and perhaps surprise them, and human editors curate the remaining 20% – the top and most important stories of the day. Schultz-Homberg credited this work with a 6% increase in overall page views. Schultz-Homberg also told INMA World Congress they have used generative AI to write horoscopes, published alongside a disclaimer. “We said if a man can invent them, a machine can do as well.”

TIME

The recent removal of TIME’s digital paywall has opened up a century of journalism for everyone. TIME’s archive contains 200 million words, a treasure trove of knowledge about a variety of historical figures. Mining it is a task well-suited for the new generation of AI technology. TIME trained the technology behind ChatGPT to produce quizzes based on stories hand-picked from the TIME archives. The user simply has to click on the article headline, next to the original issue date, to jump to the story on which each quiz is based.

INNOVATIVE GEN AI APPLICATIONS IN ADVERTISING BY THE NEW YORK TIMES AND TIMES INTERNET

The New York Times is venturing into generative AI (GenAI) to predict the most effective placement for ad campaigns based on their content, tailoring the ad’s message to fit seamlessly with the editorial environment. This method allows The Times to target niche audiences with unprecedented precision.

According to a spokesperson from The Times, this experimental product leverages GenAI to dynamically align a brand’s campaign message with relevant editorial content. It identifies the optimal contextual environments within The Times, broadening the scope beyond traditional contextual targeting. “Our new product will pinpoint readers most engaged with specific articles and target them throughout The Times, thus extending the campaign’s reach and refining its audience targeting strategy,” the spokesperson explained. This innovation means advertisers can set targeting criteria in real time, ensuring ads are always placed in suitable contexts.

The New York Times is currently inviting advertisers to beta test this technology in the upcoming quarter, with pricing details to be announced later. This project represents nine months of dedicated development work by The Times, aiming to offer a more nuanced and effective advertising solution.

TIMES INTERNET’S INTERACTIVE ADVERTISING CHAT PRODUCT

On the other side of the globe, Times Internet, India’s leading digital products conglomerate and publisher of The Times of India, the world’s largest-selling English daily, is experimenting with a novel advertising chat product. This product seeks to engage users more deeply by allowing interactive conversations within an ad. Imagine clicking on a banner ad for an electric vehicle and having a chat box open up where you can inquire about the car’s range, seat options, and more. This interactive approach not only keeps users engaged longer but also presents an opportunity for Times Internet to command premium advertising rates, thereby creating lasting value for advertisers. This advertising chat product, still under development, represents a significant innovation in digital advertising. By integrating conversational AI into ads, Times Internet is setting a new standard for user engagement and advertiser value.

HT MEDIA – PERSONALISED NEWS CONSUMPTION WITH AI

In a move towards ultra-personalised content consumption, India’s Hindustan Times Media has launched a chatbot on its financial news site, Mint. This chatbot allows readers to delve into business and economic news through an interactive query system. Yudhveer Mor, Chief Product and Technology Officer at HT Media in New Delhi, emphasises the importance of data source integrity, a common challenge with abstract language models. To ensure reliability, the chatbot is trained exclusively on articles from HT’s repertoire, focusing on content pertinent to Mint’s financial news specialisation.

Elevating User Engagement. Mor notes that chatbot inquiries are deliberately limited to topics relevant to Mint; weather-related queries, for example, are excluded. Users are prompted with a selection of sample questions reflecting current major news stories, encouraging engagement with the content. The chatbot has shown impressive results, with high click-through rates and user interaction, marking a significant shift in content consumption dynamics.

Beyond mere consumption, HT Media is exploring AI to enhance both reader and content team experiences. Mor discusses the dual potential of recommendation engines: guiding readers to their next article of interest and advising the content team on future writing directions. This tailored approach acknowledges the diverse needs and preferences of their audience. A notable challenge for HT Media, given that nearly 90% of site visitors are newcomers, is personalising content without prior user data. Mor shares how ChatGPT has been instrumental in predicting new readers’ interests, significantly boosting engagement. “We would put an article in ChatGPT and ask what they will read next. That started driving a lot of engagement for us.”

SEMAFOR – GENAI FOR CLASSIFYING INFORMATION

An example detailed on INMA’s excellent Generative AI Initiative Blog, run by Sonali Verma, again features Semafor. The digital outlet found it was hard to collect hate-crime statistics because they are defined and tracked in different ways by local U.S. police departments. So Executive Editor Gina Chua plugged a definition of hate crimes into a bot and was surprised by how well it could discover unwritten rules and relationships in articles. Chua suggests this capability could be used for “content moderation or finding violations of a given policy in a sea of complaints.” “Machine learning – where a computer ‘discovers’ unspoken rules and relationships – can be an incredibly valuable method for sorting this sort of information,” she writes.

BALTIMORE TIMES – GENAI FOR DIVERSITY

The Baltimore Times has created a set of inclusive avatars and voices that allow its audiences to select from multiple diverse “personas” that reflect and represent the audiences they seek to serve. The move addresses a critical concern in artificial intelligence: the tendency of AI to replicate existing biases based on the data it’s trained on. By intentionally creating avatars and voices that celebrate diversity, The Baltimore Times is pioneering a more inclusive approach to AI in media. Utilising its Zing AI audio content extensions, The Baltimore Times has crafted a collection of avatars and voices designed to offer choices that resonate with different segments of its audience.

“You can pick whoever you want to read the story to you,” explains Paris Brown, associate editor of The Baltimore Times, highlighting the initiative’s aim to create a more authentic and engaging experience for the Black community and other underrepresented groups.

GENAI FOR INVESTIGATIVE JOURNALISM – CUSTOM GPT

Not an example from a major publisher, but we’re detailing here the work of Filipino journalist Jaemark Tordecilla, who created a custom GPT called COA Beat Assistant to advance watchdog journalism. Journalists in the Philippines rely on reports from the government’s Commission on Audit (COA). The audit reports usually provide leads that reporters can explore further to see if there are anomalies in how public funds are spent. Tordecilla showcased the new tool during a workshop for journalists organised by the Philippine Center for Investigative Journalism (PCIJ), attended by Sheila Coronel of Columbia J-School, the PCIJ’s founding executive director. Coronel tried the tool out on the audit reports for provinces in Mindanao in the southern Philippines, some of which are among the poorest areas in the country. Immediately, she noticed an item about the millions of pesos being spent by one area on Gender and Development and flagged it as a potential investigative story. Custom GPTs offer newsrooms the ability to automate the extraction of relevant information and generate summaries, bypassing the need for extensive machine learning training previously required for similar tasks. Tordecilla writes that the tool took him 16 hours to build and that he expects it to save reporters 80% of the time they spend combing through audit reports.

In a talk at SXSW, an annual conference held in Austin, Texas, Zach Seward, the newly appointed director of AI initiatives at the New York Times, enumerated the ways in which machine learning (ML) models can recognise patterns the human eye cannot see: patterns in text, data, images of data, photos on the ground, and photos taken from the sky. Seward is building a newsroom team charged with prototyping potential uses of ML. Here are his examples of how newsrooms have leveraged ML to tell stories.

GRIST AND THE TEXAS OBSERVER

The Texas Observer and Grist, a non-profit newsroom dedicated to environmental coverage, investigated abandoned oil wells in Texas and New Mexico, which number in the tens of thousands. Using data on the roughly 6,000 wells already marked by the states as abandoned, as well as on tens of thousands of additional wells that seemed similar, Clayton Aldern of Grist employed statistical modelling to compare conditions around the official list of abandoned wells with a much larger dataset of sites. They found at least 12,000 additional wells in Texas that were abandoned but off the books.

THE WALL STREET JOURNAL

Image recognition has proven to be a valuable asset in investigative journalism, as demonstrated by The Wall Street Journal in a series of articles looking at lead cabling in the US. Led by John Bebe-West, the investigation used Google Street View images to survey areas surrounding schools in New Jersey. A machine-learning model trained to identify lead cabling recognised the existence of a public health hazard: the investigation revealed extensive lead cabling in public areas nationwide, prompting on-site testing for lead exposure. Many of these tests confirmed alarmingly high levels of lead contamination.

THE NEW YORK TIMES

A report led by Ishaan Jhaveri at The Times is an example of a story that could not have otherwise been told without machine learning. The New York Times, which has employed satellite imagery for investigative purposes in the past, used machine learning to assess Israel’s bombing campaign in southern Gaza. The visual investigations team used AI to analyse satellite imagery for bomb craters. The AI tool identified over 1,600 potential craters, which were then manually reviewed to eliminate false positives. Craters spanning approximately 40 feet or more, typically caused by 2,000-pound bombs, were further scrutinised. Finally, 208 craters were identified using satellite imagery and drone footage, indicating a significant threat to civilians seeking refuge in southern Gaza.

THE MARSHALL PROJECT

The Marshall Project, a non-profit newsroom covering the US justice system, investigated banned book policies in state prisons. They compiled a database of banned books and obtained official policies from 30 state prison systems. To make these policies more accessible, journalists analysed each document to extract relevant information. Andrew Rodríguez Calderón then used OpenAI’s GPT-4 with specific prompts to generate concise, reader-friendly summaries of the policies, which journalists reviewed before publication. The use of a large language model (LLM) allowed The Marshall Project to efficiently summarise policies from all 30 states.

REALTIME

Created by Matthew Conlen and Harsha Panduranga, Realtime is a news site that offers regularly updated data feeds from various sources: financial markets, sports, government records, prediction markets, and public opinion polls. The data and charts are automated, with a focus on highlighting noteworthy trends, outliers, and significant growth or decline. Large language models (LLMs) help by providing contextual information, including headlines and brief descriptions surrounding the charts, to aid reader comprehension. These applications represent targeted uses of LLMs, with transparency regarding the nature of the generated reports: it is made clear that bots wrote them.

WITI RECOMMENDS

Another website utilising an LLM in the background, WITI is a popular daily newsletter that has been active for five years and features numerous guest writers. Each edition often mentions products favoured by the writer. Noah Brier, one of the co-founders of WITI, saw an opportunity in collecting and collating these recommendations. However, the challenge lay in extracting these recommendations from the unstructured prose of the newsletter, which sometimes included irrelevant links. Brier tackled this issue by devising a precise prompt for GPT-4, enabling the model to extract and categorise product recommendations across 1,500 editions of the newsletter in a display-ready format. As Seward said in his talk, the most powerful use case for LLMs is “creating structure out of unstructured prose.” Indeed, “faced with the chaotic, messy reality of everyday life, LLMs are useful tools for summarising text, fetching information, understanding data, and creating structure.”

For news organisations wondering how to enter the fray, the POLIS report offers six steps towards an AI strategy:

- Get informed. See the LSE JournalismAI website for online introductory training, the AI Starter Pack, a Case Study hub and a series of reports on innovation case studies. Other sources are available.

- Broaden AI literacy. Everyone needs to understand the components of AI that are impacting journalism the most, because it will affect everyone’s job – not just editorial, and not just the ‘tech’ people.

- Assign responsibility. Someone in your organisation should be given responsibility for monitoring developments both in your workplace and more widely. Consider assigning AI innovation and R&D leads, and keep a conversation going within your organisation about AI.

- Test, iterate, repeat. Experiment and scale but always with human oversight and management. Don’t rush to use AI until you are comfortable with the process. Always review the impact.

- Draw up guidelines. They can be general or specific. This is a useful learning process when done inclusively to engage all stakeholders. And be prepared to review and change them over time.

- Collaborate and network. There are many institutions such as universities or intermediaries like start-ups who are working in this field. Talk to other news organisations about what they have done. Generative AI technologies may present new opportunities for newsroom collaboration given the high enthusiasm about and accessibility of genAI tools.

As freely available generative AI tools flood the media landscape, many publishers are putting in place guidelines and codes of practice for their use in newsrooms, as well as ideas about how they can be implemented to create efficiencies, save time, and aid journalists in their tasks rather than replace them entirely.

The Washington Post

Writing in a statement that AI “presents a significant opportunity to support the work of our journalists and enable us to better serve our readers,” Fred Ryan, Washington Post CEO, said the Post has established an AI task force made up of many of the title’s senior leaders who will be “charged with establishing the company’s strategic direction and priorities for advancing our AI capabilities”. The Post has simultaneously created a small full-time team in an AI hub to “expedite our AI initiatives and foster cross-functional cooperation”. The hub will also spearhead experimentation and proof-of-concept initiatives across the company and ensure everything stays within the strategies and guardrails set by the AI task force. “This is only the first step in establishing AI as a priority opportunity for The Washington Post,” Ryan said. “As we learn more, we will adjust team structures and allocate resources that will deliver value and results.”

Mediahuis

Acknowledging the potential of AI to “make or break the newsroom”, Gert Ysebaert, Mediahuis CEO (Belgium), told the International News Media Association World Congress in New York that it was essential that newsrooms adapt to the drastically changing landscape. “[W]e have to think how can we implement this very fast and do this right, preferably to also mitigate the risks that are coming and the huge challenges,” he said. It was also imperative, he said, that media companies test AI in a “controlled way”. Mediahuis has created an AI framework of seven principles about how to use the tech in the newsroom in an “ethical and responsible way” and “augment journalism, not replace journalism”. For example, the editor-in-chief is still “responsible for everything that’s published” with a human always “in the loop”, and readers must be informed if AI has been used for anything, whether it is a section of an article or a summary.

Reuters US

Reuters has laid out four principles for its journalists when using AI to ensure they use the tech effectively while remaining the “world’s most trusted news organisation”. Alessandra Galloni, editor-in-chief (US), noted that Reuters has always “embraced new technologies” including using automation for extracting economic and corporate data: “The idea of autonomous news content may be new for some media companies, but it is a longstanding and essential practice at Reuters News.” “Second, Reuters reporters and editors will be fully involved in – and responsible for – greenlighting any content we may produce that relies on AI,” Galloni wrote. She also promised “robust disclosures” to the audience and said journalists must “remain vigilant that our sources of content are real. Our mantra: Be sceptical and verify.”

The Financial Times

The Financial Times, while using AI for summarising and visual creation (infographics, photos and diagrams) with human oversight, acknowledges that generative AI models can produce false facts, references, links, images and even articles. In an op-ed, Roula Khalaf, editor, promised that the FT’s journalism will continue to be “reported, written and edited” by humans, saying that trust matters above all else.

“The FT is also a pioneer in the business of digital journalism and our business colleagues will embrace AI to provide services for readers and clients and sustain our record of effective innovation,” Khalaf said. “Our newsroom too must remain a hub for innovation. It is important and necessary for the FT to have a team in the newsroom that can experiment responsibly with AI tools to assist journalists in tasks such as mining data, analysing text and images and translation. We won’t publish photorealistic images generated by AI but we will explore the use of AI-augmented visuals (infographics, diagrams, photos) and when we do we will make that clear to the reader. This will not affect artists’ illustrations for the FT.”

USA Today

USA Today, like other organisations, has created guidelines on generative AI, seen as “guideposts” rather than mandates. Nicole Carroll, who stepped down as editor-in-chief of USA Today in May 2023, recommended that other publishers assign someone to play with the new generative AI tools as soon as possible, while many of them are still free to use. “See what it does, see what it doesn’t do, because we don’t know where this is going but we need to be ready,” she told the World Congress. “And so to be ready, you have to have some experience in there. So that’s one thing we can all do is go assign somebody to go in there and just play and create and see what they see – and then also get your own internal standards out today before something happens you’re not happy with.”

AI has already had an impact on journalism, the news industry, and by extension the public arena. The size and significance of this impact, however, will only become clear with time. This is especially true given ‘Amara’s law,’ the adage coined by American scientist and futurologist Roy Amara, according to which we overestimate the impact of technology in the short-term and underestimate the effect in the long run.

OpenAI only released ChatGPT in late November 2022, but by January 2023 it was reported to have reached 100 million users. Working practices will clearly change and some jobs will be replaced. New ones will be created with different skills and responsibilities. Many journalists who have experimented with genAI can see how it can make their work much more efficient and add new dimensions to what they offer to the public.

As the reports we look at in this chapter have shown, AI is a volatile technology for news organisations. Most are aware of the inherent risks in AI technologies generally and the dangers of bias or inaccuracy. They are discovering that applying AI in news production has immediate possibilities, but how it will shape future practice is uncertain. New forms of AI have generated much speculation about models’ capacity to produce news content, the accessibility and reliability of the data sources and techniques they use to generate text and images, and the potential for these sources to provide misleading information. They have also been discussed in terms of copyright issues, liability, and the existential risks they may pose.

GenAI has also, however, created the threat of ‘disintermediation’ for the news media. Why should people go to a news organisation for information if they can just prompt a chatbot? The POLIS survey suggests that many newsrooms are now working hard to answer that question in a way that affirms the utility and importance of journalism as part of our social, economic and political lives. In a world of unreliable information, responsible, public service journalism must prove its value.

Ultimately, Felix Simon concludes, AI constitutes a retooling of the news rather than a fundamental change in the needs and motives of news organisations. It does not impact the fundamental need to access and gather information, to process and package that information into “news,” to reach existing and new audiences, and to make money. However, it will undoubtedly play a transformative role in reshaping news work, from editorial to the business side, although the extent of this reshaping will be context- and task-dependent and will also be influenced by institutional incentives and decisions. Importantly, news organisations that have been able to invest in research and development, devote staff time, attract and retain talent, and build infrastructure already have something of a head start in this reshaping of the news ecosystem. And as media organisations are reshaped, the public arena that is so vital to democracy, and for which news organisations play a vital gatekeeper role, will also see a sea change. This will unfold depending on the actions of 1) executives, managers, journalists – those who wield direct control over the conditions of news work, and 2) technology companies, regulatory bodies, and the public.

We can do no better than to present some important takeaways from a recent Tow Center report, “Artificial Intelligence in the News: How AI Retools, Rationalises, and Reshapes Journalism and the Public Arena”, which illuminate the way forward for AI and the media and bring home the major issues in a wonderfully succinct way:

● Productivity gains from AI in the news will not be straightforward. The benefits of AI to the news will be staggered. They will incur costs in the early stages and necessitate changes at the organisational and strategic level.

● The adoption of AI in news organisations will not be frictionless. Regulation, resistance from news workers, audience preferences, and incompatible technological infrastructure are just some of the variables that will shape the speed at which news organisations adopt AI, and, by extension, the rate at which tangible effects on the news come into focus.

● AI will not be a panacea for the many deep-seated problems and challenges facing journalism and the public arena. Technology alone cannot fix intractable political, social, and economic ills. News organisations will continue to be forced to make a case for why they still matter in the modern news environment — and why they deserve audiences’ attention and money.

● The concentration of control over AI by a small handful of major technology companies must — and will — remain a key area of scrutiny. Control over infrastructure confers power.

● Developing frameworks to balance innovation – which is bound to continue – through AI in the news with concerns around issues like copyright and various forms of harm will remain a difficult and imperfect but necessary task.

More concretely, certain AI technologies will gain traction:

● The Reuters trends and predictions report foresees experimental interfaces to the internet such as AR and VR glasses, lapel pins, and other wearable devices as being a feature of the year ahead. Additionally, existing voice activated devices such as headphones and smart speakers, as they get upgraded with AI technologies, will likely displace – or at least supplement – the smartphone in the medium term.

● The survey also indicates that AI bots and personal assistants will gain more traction in 2024 with up-to-date news. Important legal and ethical questions will be raised, however, as many of these bots will be personality- or journalist-driven as cloning technologies improve.

Finally, author Newman predicts that the battles between the AI Doomers and the AI Accelerationists will continue, although accelerationists will remain in the driving seat this year as governments struggle to understand and control the technology. Overall, however, the story is clear: if 2023 was about coming to terms with generative AI, this year is all about the various ways in which it is making its way into newsrooms across the world. Stay tuned for more, because this wave has only just started.

The Innovation in Media World Report is published every year by INNOVATION Media Consulting, in association with FIPP. The report is co-edited by INNOVATION President, Juan Señor, and senior consultant, Jayant Sriram